Winter OSINT Digest: OSINT for Body Identification, AI-Assisted Predictive Analysis, Generative AI and Supercharged Crime, and More…

It’s OSINT digest time again, and we’re putting the magnifying glass over everything that’s happened since the last quarter in the open-source intelligence and cybersecurity spheres.

In this issue, we discuss the role of OSINT in identifying the deceased, the rise of AI-driven predictive analysis and the pitfalls that come with it, how generative artificial intelligence is empowering criminals (and making life very difficult for security specialists), and so much more. Also, it wouldn’t be an OSINT digest without our term of the quarter.

Let’s see what’s what!

The US is currently dealing with a massive opioid problem. Fueled by increasing poverty, drug abuse is now resulting in worryingly high fatality numbers. Sadly, in many cases, those who’ve lost their lives remain unidentified, as public organizations are overloaded with identification procedures, and DNA tests are too costly to run for every case.

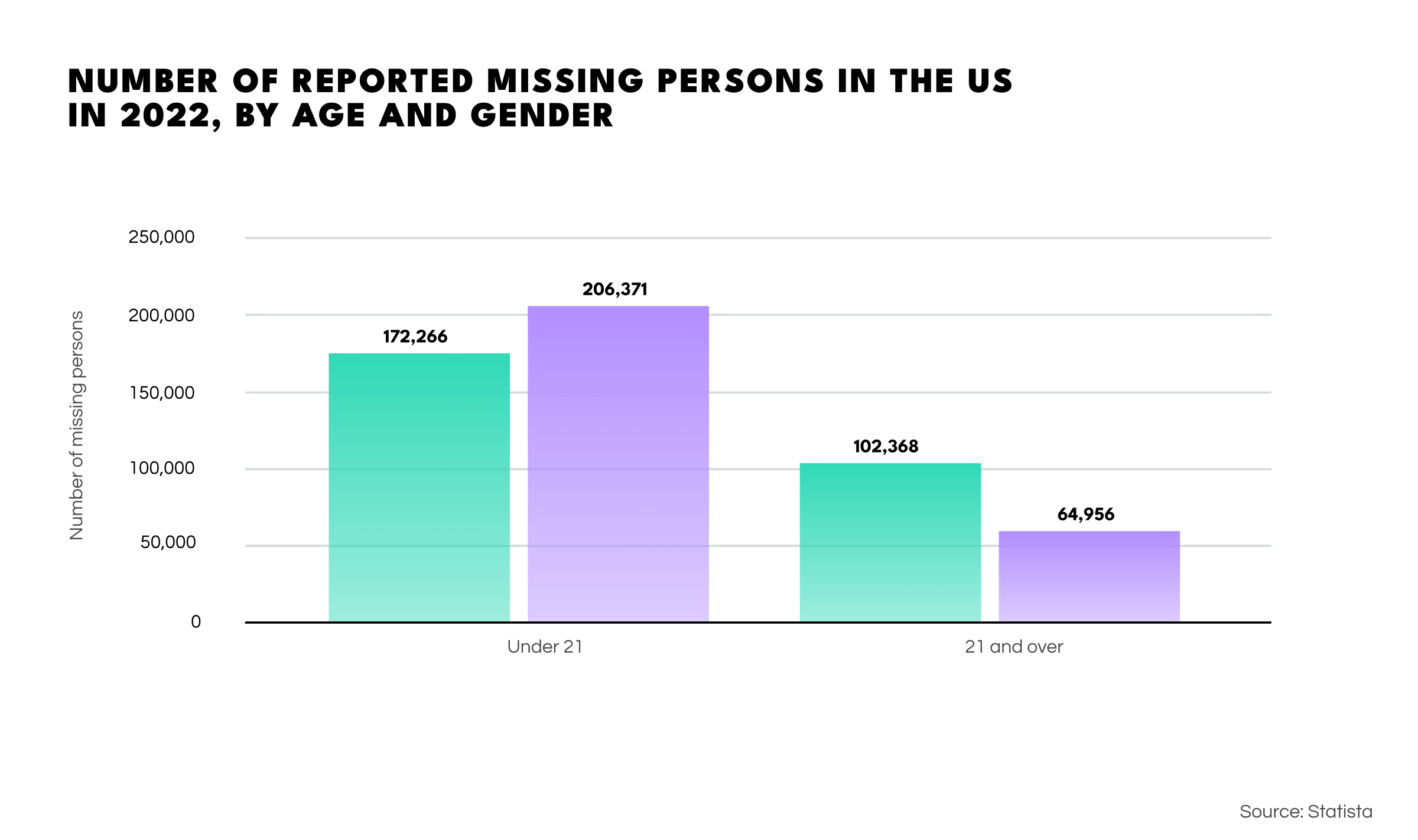

In 2022 alone, 665k people went missing in the US. Regrettably, the overall figures show a sharp increase in victims under the age of 21. To combat this issue, the government has established a national database of missing and unidentified persons, which provides supporting information and images for each subject to help resolve cases.

However, the US government’s inability to tackle the crisis effectively has sparked a grassroots movement aimed at providing the lacking solution. Through a TikTok channel and Facebook group, volunteers are banding together to help identify the deceased. These amateur sleuths are using classic OSINT techniques, as well as facial recognition tools and online image collection sources, in an attempt to identify victims of the opioid epidemic.

A moral dilemma lies at the heart of this story, though. These volunteers are sifting through the Internet and public databases with automated systems to discover the identities of the deceased. What would happen if the system had a glitch and misidentified a living person as dead? This raises the question of the moral responsibilities of OSINT providers: how might the data they make available impact ordinary people? Perhaps it’s time to create an OSINT code of conduct to prevent such things.

Open-source intelligence usually deals with the present or past—analysts look back at what has happened or watch what is currently unfolding, using whatever open data is to hand. But now, thanks to a giant leap in technology, some developers are working on tools that can collect and filter open data from massive amounts of information to project patterns into the future and anticipate events.

While such systems have proved to work well in finance, manufacturing, and healthcare, the big question is, can they be useful for state intelligence and law enforcement? The answer is yes, but it’s not a straightforward business.

Some tools aim to anticipate unexpected events, such as uprisings and even wars. CulturePulse, a predictive analytics startup, is currently working on a solution that already has the UN as a customer. The company can create a realistic “digital twin” of armed conflicts by studying historical, cultural, economic, and geographical data. The developer’s model considers over 80 categories from more than 50 million articles to predict potential conflicts based on traits like anger, anxiety, religious beliefs, and racism among the population.

The idea is to test how effective potential policies, such as economic reform or political change, may be at resolving conflicts. It might sound implausible, but the founders claim a 95% accuracy rate, and their system even predicted paramilitary tensions in Ireland after Brexit.

Switching gears, predictive analysis is making its way into law enforcement with ‘predictive policing.’ This refers to a system that uses historical criminal data and socio-economic analysis to predict where crimes are most likely to occur. It then arranges police patrols in these areas to preempt and prevent incidents, focusing mainly on street crimes and burglary.
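To make the idea concrete, here is a minimal sketch of the simplest form such a system could take: counting past incidents per map grid cell and ranking the busiest cells. Everything in it is an assumption for illustration (the incident coordinates, the 1 km cell size, and the function names); real products use far richer models and data.

```python
from collections import Counter

# Hypothetical historical incidents as (x, y) positions in kilometres.
incidents = [
    (0.2, 0.3), (0.4, 0.1), (0.7, 0.9), (0.1, 0.6),
    (1.2, 0.4), (1.5, 0.8),
    (2.3, 2.7),
]

CELL_KM = 1.0  # assumed grid resolution


def to_cell(x, y, size=CELL_KM):
    """Map a coordinate to the grid cell that contains it."""
    return (int(x // size), int(y // size))


def rank_hotspots(points, top_k=2):
    """Return the top_k grid cells by historical incident count."""
    counts = Counter(to_cell(x, y) for x, y in points)
    return counts.most_common(top_k)


hotspots = rank_hotspots(incidents)
print(hotspots)  # [((0, 0), 4), ((1, 0), 2)]
```

Note that a ranking like this can only echo whatever is in the historical records, so any skew in how incidents were recorded carries straight through to where patrols are sent.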

Solid as this plan may seem, it has not been as successful as hoped. Used in 38 US cities, the system has fallen dramatically short of expectations with less than 1% accuracy. Critics have blamed this on bias, claiming that the system relies too heavily on stereotypes, such as assuming higher crime rates in Black and Latino communities. Similar issues are present in software used for gauging criminal potential in individuals.

This failure of AI-driven social forecasting seems to come down to the complexity of events and biases in the training data. Countless factors can influence an outcome, and the analysis process isn’t as straightforward as a flowchart. So, intelligence analysts don't need to worry about AI taking over their jobs (at least not yet).

Generative AI has opened up a world of possibilities for creativity and productivity, enabling the generation of all kinds of creative texts in multiple languages, as well as realistic images and videos. However, with these technologies becoming accessible to everyone, darker applications are beginning to emerge. AI is now extensively used to create propaganda and disinformation that spreads quickly and virally, and to commit a range of online crimes.

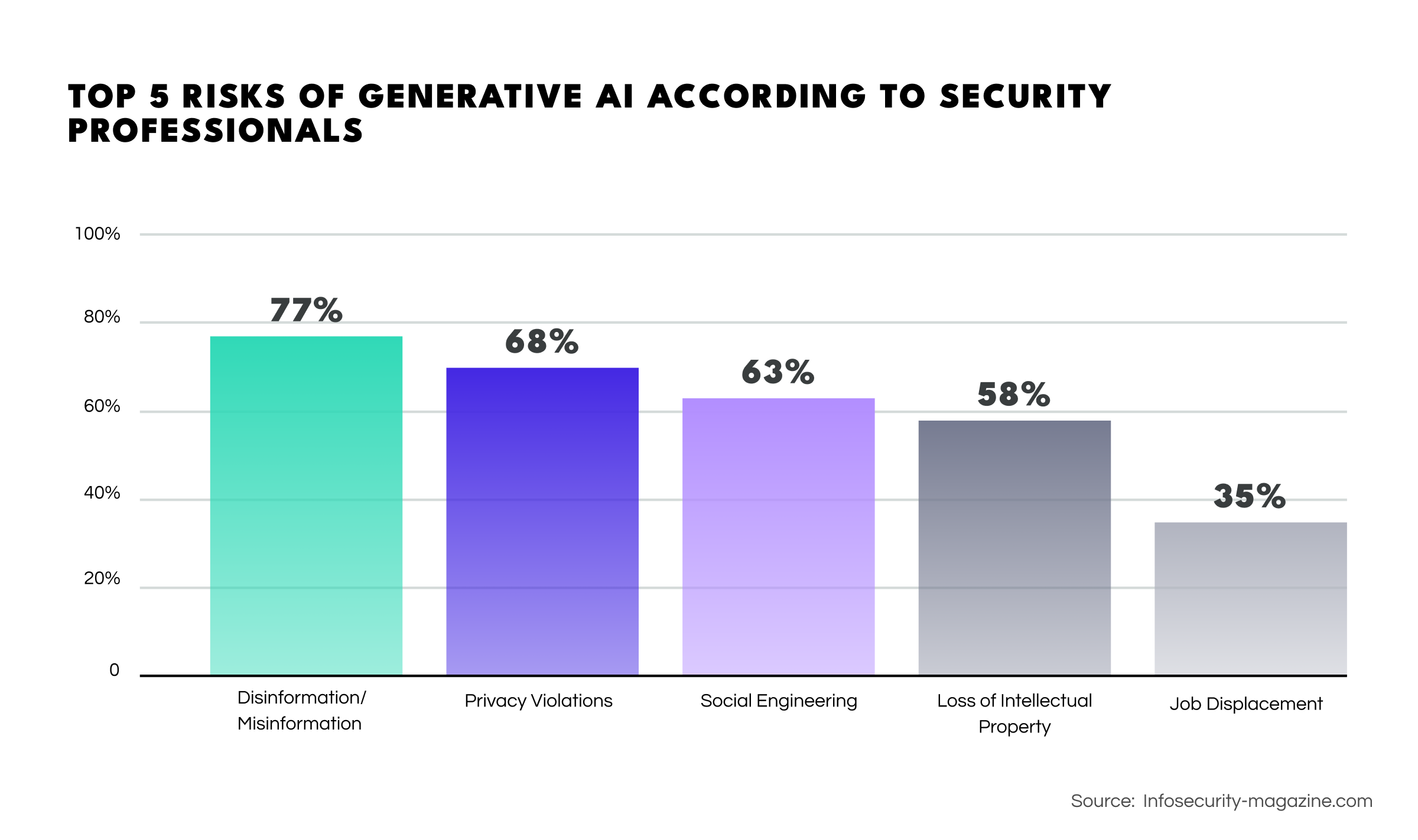

A survey conducted by ISACA indicates that 77% of respondents see disinformation as the most significant risk posed by generative AI, followed by privacy violation (68%), social engineering (63%), loss of intellectual property (57%), and job displacement (35%). However, the actual risks are even more concerning than these perceptions suggest.

Phishing. ChatGPT has become a tool for cybercriminals, leading to a 1265% increase in phishing campaigns in 2022. The system’s scalability allows fraudsters to generate phishing content much faster than would be possible manually. For instance, the time to draft a phishing email has been slashed from approximately 16 hours to 5 minutes.

Extremism and Terrorism. The spread of extremist ideas and terrorist propaganda has accelerated, driven by AI-generated content on social media. Efforts to create databases of identified extremist propaganda have struggled due to the massive influx of new material, with nearly 5000 new items per week.

Deepfake Porn. Nonconsensual deepfake porn has become a global digital-crime issue, with around 250k deepfake videos having appeared on popular adult content websites. The easy accessibility of deepfake porn creation tools has led to a 54% year-over-year increase, impacting both adults and children.

Child Sexual Abuse. More than 20k cases of AI-generated child sexual abuse material have now been detected, with over half warranting legal action. While laws against deepfake content are emerging in the UK and US, the potential impact on a victim's mental health, social life, and employment is severe and can result in self-harm and suicide.

Challenges for AI-Makers. Creators of generative AI tools are continually refining their algorithms to prevent criminal misuse. However, flaws exist. For example, ChatGPT has been tricked into revealing fragments of its training data when prompted to repeat a word over and over. Moreover, criminals also use jailbroken GPT spin-offs, like FraudGPT, for phishing, vulnerability identification, and hacking tuition.

While law enforcement agencies grapple with AI exploitation, the black hat market is thriving on AI-enhanced solutions, bombarding web investigators with misleading content. In such a climate, the best approach for the OSINT industry to distinguish between human and machine-generated content may be to fight AI with AI and employ machine-generated content detection algorithms.

Our term of the quarter is the rug pull. This is a type of scam. More specifically, it’s a fraudulent strategy where scammers create a cryptocurrency, simulate positive trading activity to attract more investors, and then abruptly sell their holdings at an inflated price, leaving investors with a significantly devalued cryptocurrency.

There are two main types of rug pull scam:

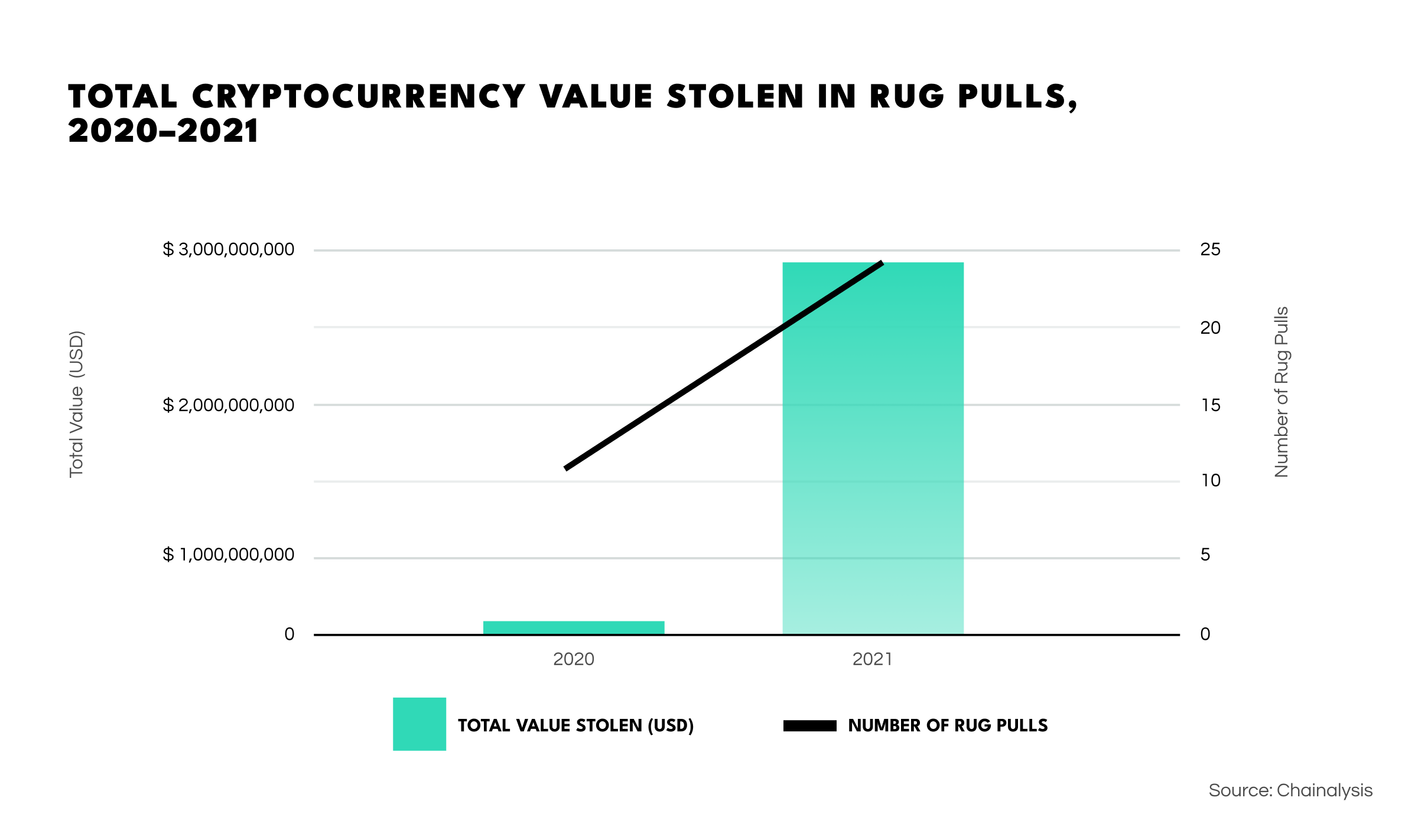

Such scams have already hit many investors and have surged in frequency, with individual cases causing losses of more than $10M. More concerning still, in 2021 alone, rug pulls cost crypto investors $2.8B.
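The mechanics of a dump-style rug pull can be sketched with a toy model. The example below assumes a constant-product liquidity pool (tokens × cash stays constant on every trade) with no fees; that pricing model, the class and variable names, and every number are illustrative assumptions rather than a description of any real exchange or incident.

```python
class Pool:
    """Toy constant-product pool: tokens * cash is preserved by each trade."""

    def __init__(self, tokens, cash):
        self.tokens = tokens
        self.cash = cash

    def price(self):
        """Marginal price of one token in cash units."""
        return self.cash / self.tokens

    def buy(self, cash_in):
        """Pay cash into the pool and receive tokens."""
        k = self.tokens * self.cash
        self.cash += cash_in
        tokens_out = self.tokens - k / self.cash
        self.tokens -= tokens_out
        return tokens_out

    def sell(self, tokens_in):
        """Pay tokens into the pool and receive cash."""
        k = self.tokens * self.cash
        self.tokens += tokens_in
        cash_out = self.cash - k / self.tokens
        self.cash -= cash_out
        return cash_out


# The scammer seeds a small pool and keeps a large stash of tokens aside.
pool = Pool(tokens=1_000_000, cash=10_000)
scammer_stash = 9_000_000
start_price = pool.price()

# Hype attracts investors, whose buying pushes the price up.
investor_tokens = pool.buy(90_000)
peak_price = pool.price()

# The scammer dumps the stash at the inflated price and drains the pool.
scammer_cash = pool.sell(scammer_stash)
final_price = pool.price()

print(f"start {start_price:.4f}, peak {peak_price:.4f}, final {final_price:.6f}")
print(f"scammer walks away with {scammer_cash:,.0f}")
print(f"investors' tokens now worth about {investor_tokens * final_price:,.0f}")
```

In this toy run, the investors put in 90,000 and are left holding tokens worth a tiny fraction of that, while nearly all the cash in the pool ends up with the scammer.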

The promise of good deals has led to a 237% increase in phishing scams. Fraudsters use brand logos and spoofed look-alike designs that seem legitimate to trick unsuspecting shoppers into handing over personal information, and many people are falling victim as a result. Such scams tend to follow cycles, often intensifying in frequency during peak shopping seasons.

Intelligence chiefs of the Five Eyes alliance have warned of a notable increase in attempts by hostile states to steal intellectual property. The heads of intelligence agencies from the UK, Australia, Canada, the United States, and New Zealand presented five principles for businesses to adopt to enhance staff and information security. They emphasized the importance of vigilance and response, particularly in artificial intelligence, quantum computing, and synthetic biology.

Institutions are grappling with a staggering $206B in global financial crime compliance costs—surpassing 12% of the world's R&D expenditure. Despite 71% of financial crime professionals leveraging AI to enhance compliance procedures, persistent issues like data silos and outdated systems prevail. EMEA institutions face a 39.8% higher cost than the U.S./Canada due to escalating compliance intricacies.

In today's digital landscape, where algorithmic systems shape every facet of society, the shadows of injustice loom. Discriminatory algorithms in social media, job interviews, and criminal sentencing challenge regulatory mechanisms. In response, there are calls for a platform-independent transparency framework, an "inspectability API," that would empower individuals to scrutinize and export data from mobile apps, promoting accountability and understanding.

With Neo-Nazi groups such as the Tennessee Active Club now using Telegram for threat dissemination, the platform's role in aiding the growth of decentralized hate groups is in the spotlight. The group, disguised as a community promoting fitness and brotherhood, has seen explosive growth, with Telegram playing a pivotal role in recruitment and the spread of hate speech, giving radical groups of this kind a platform free of censorship.

Insider cyber threats have become a new danger to cybersecurity departments. The average cost of an insider cyberattack has risen by 40% since 2019, reaching an annual cost of $16.2M globally and an average cost per incident of $125k to $179k.

And that’s all for our winter OSINT digest! We hope you’ve now got a good idea of all the new developments in the sphere and are ready to tackle the new year armed with relevant knowledge.