Q3 OSINT News: A Battle of Acronyms, the BBC’s New OSINT Team, AI-Driven Disinformation, and More…

It’s digest time again! And the last quarter has certainly been an intriguing one. So, we’re here to share the stories that most caught our attention over the last three months and bring you up to speed with the latest twists and turns of the OSINT and cybersecurity spheres.

In this issue—a different meaning of the term OSINT (and what it may imply for the one we’re familiar with), the BBC’s new open-source intelligence unit, next-level disinformation created through generative AI, and much more. Not to mention our customary term of the quarter.

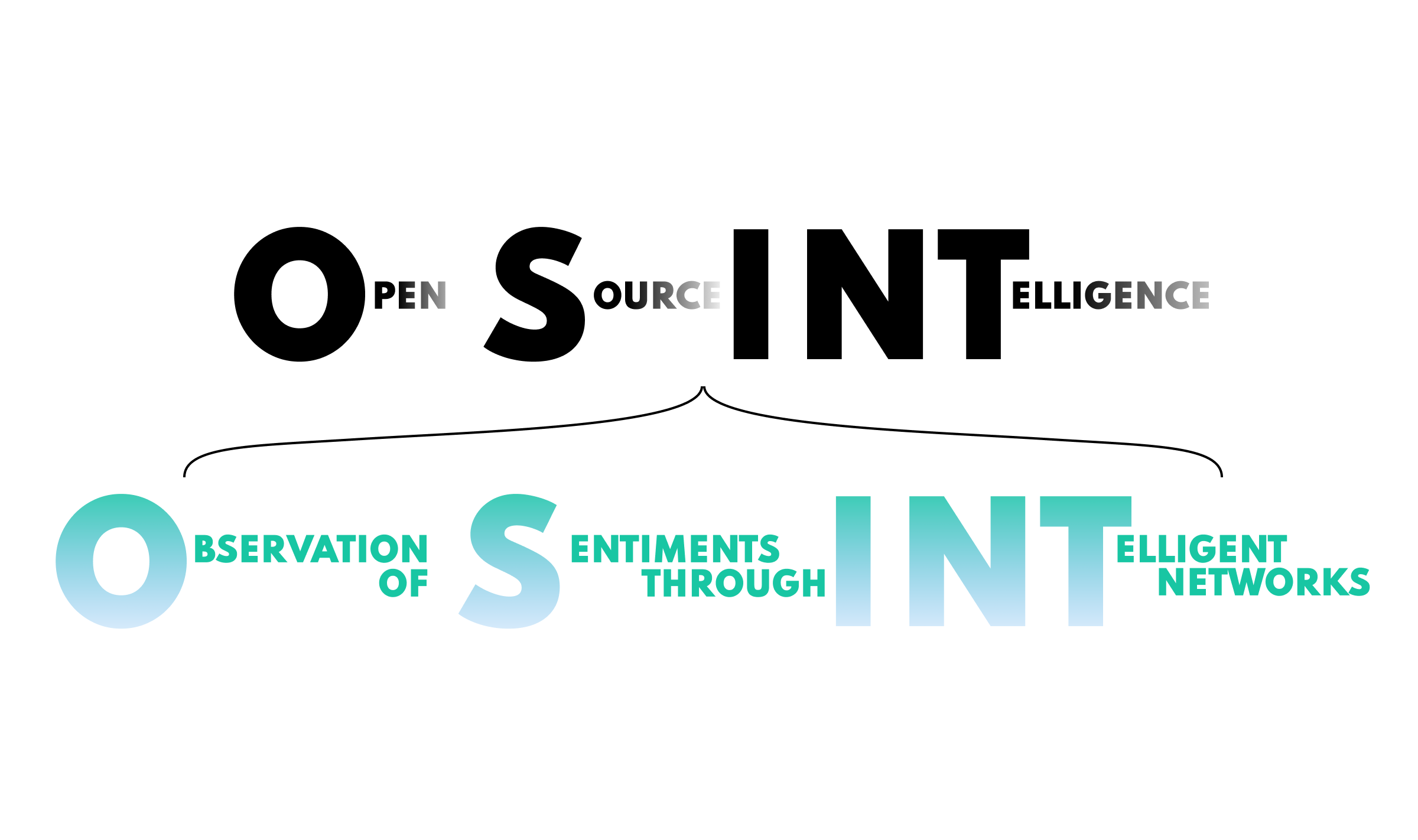

For anyone in the sphere, the acronym OSINT is part of the furniture. And it can only mean one thing—open-source intelligence—right? Well, not anymore. There’s actually now another term jockeying for ownership of this acronym—Observation of Sentiments through Intelligent Networks.

And what—you might ask—is that? It’s a discipline that employs different forms of AI—such as machine learning, natural language processing, and facial recognition—to assess the emotional output of a given text, image, video, or audio recording—the last of which is still a work-in-progress.

If you’re wondering what’s so impressive about that, it’s that the process goes way beyond determining a general emotional state such as anger, happiness, etc., to elaborate an entire emotional map of an individual as well as a roadmap of potential reactions to given information and scenarios. And it can be used in group behavioral studies.

Combining automated media analysis with psychological assessment, this new OSINT allows the good old standard OSINT to provide more scope for investigations, going beyond pure evidence collection to a more sophisticated level of intelligence craftsmanship enhanced by explicit and implicit behavioral analysis.

So, what are some of the most intriguing possibilities of this new discipline?

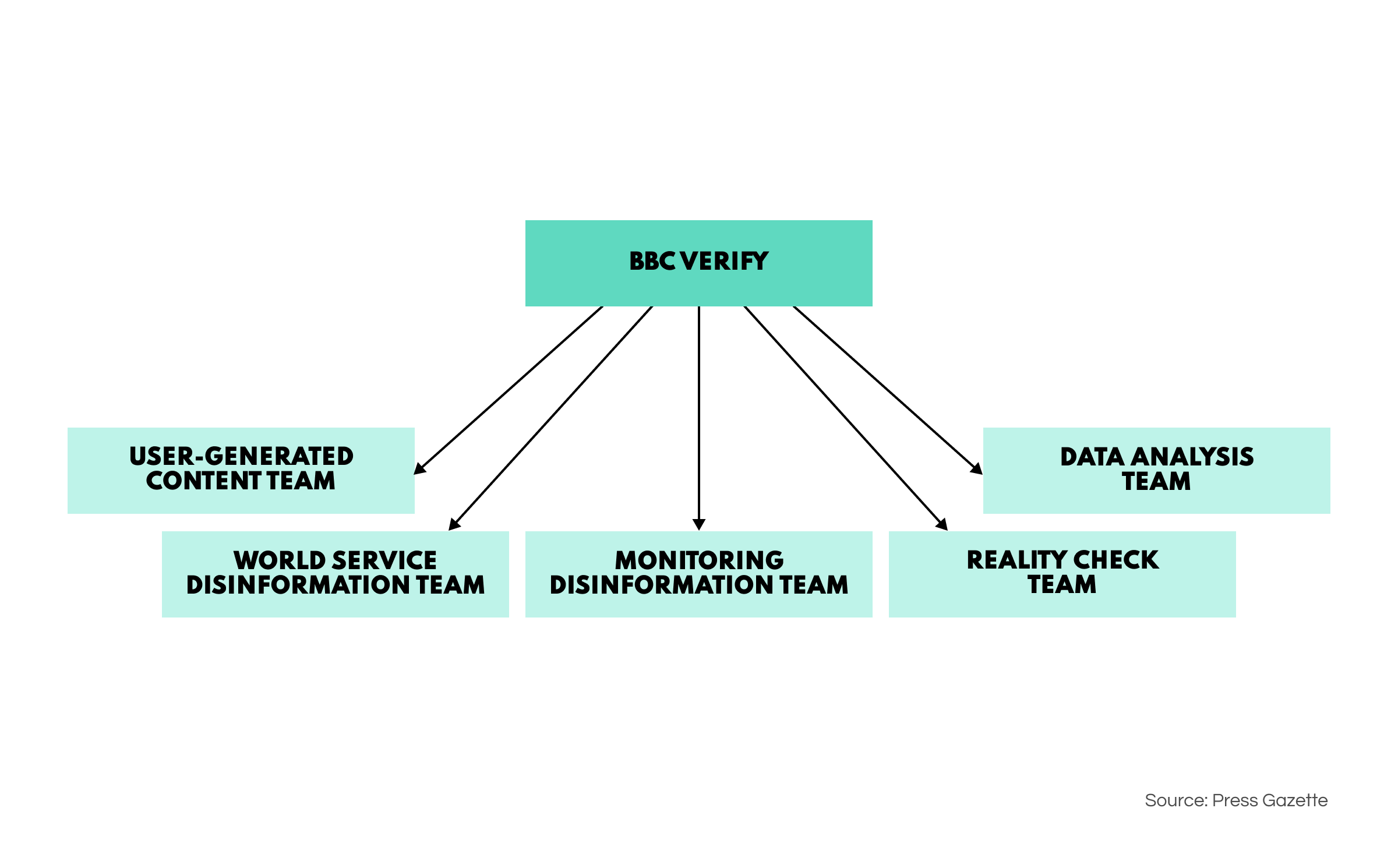

In response to a current climate awash with post-truth, deepfakes, and fake news, the news arm of the BBC has recently established its very own OSINT department. According to the BBC’s official website, the special unit is called ‘Verify’ and comprises 60 journalists who specialize in forensic investigations and open-source intelligence.

The main goals of the OSINT unit include fact-checking, media intelligence and verification, disinformation identification, data analysis, and—of course—investigative journalism. The team has already published some of their work relating to economic analysis and fact-checking statements from the Prime Minister.

This actually represents something of a landmark for the OSINT community, showing that the discipline has outgrown its role as a supplementary tool for investigators and become a major industry in itself, where professionals are in high demand. OSINT has genuinely graduated from its niche origins to something of huge relevance for a range of spheres.

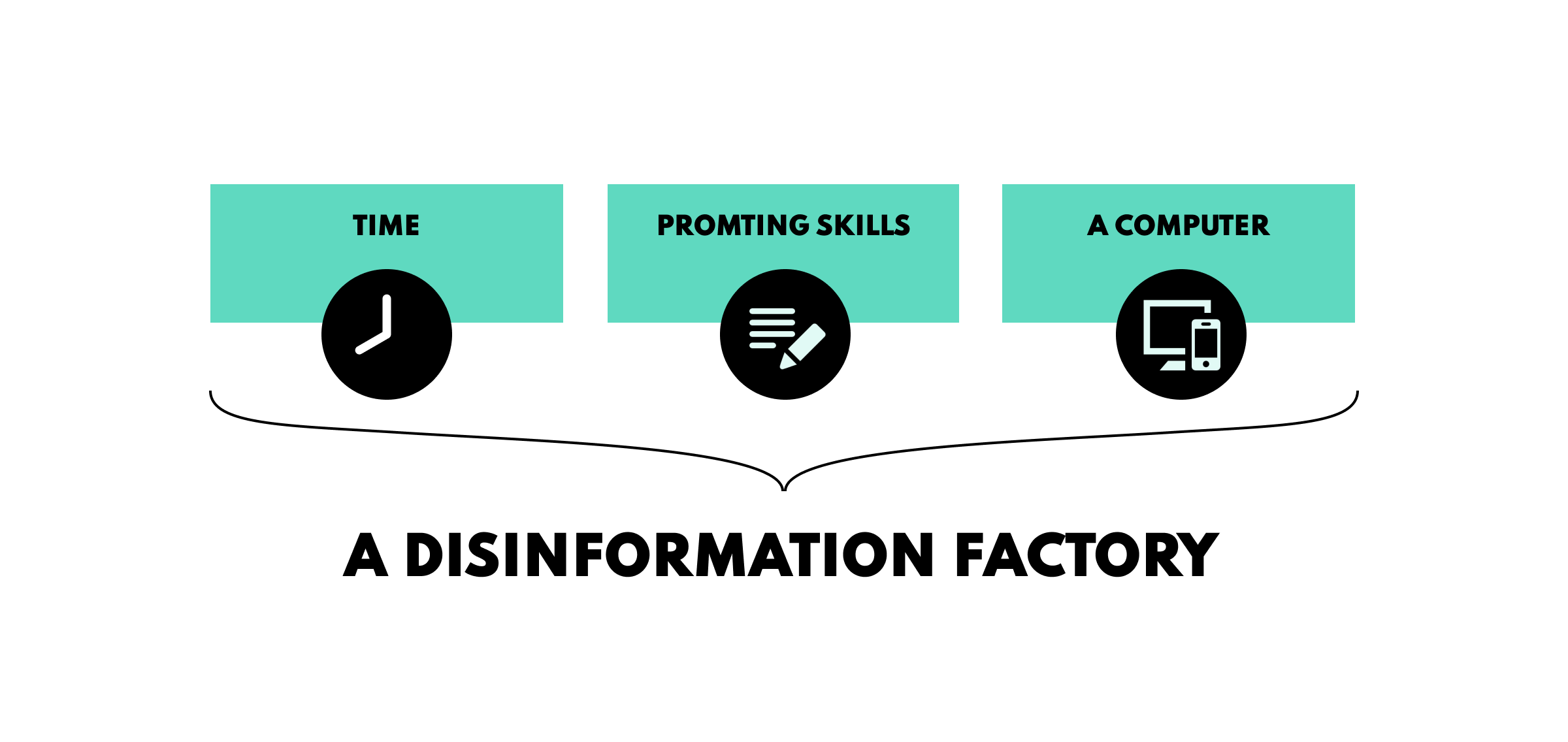

Since the 2016 US elections, the issue of disinformation and its various pitfalls has been a recurrent public concern. But now, with the advent of generative AI, the disinformation campaigns of the recent past may appear totally primitive against the new wave of deception heading for the informational space.

When we talk about disinformation campaigns, we generally mean the collective output of well-budgeted ‘PSYOP’ centers with teams of people working round-the-clock to create social media spin on political, social, and economic issues. However, due to a lack of specialist knowledge and English fluency, 90% of this content fails to convince.

But the tide is turning. With the help of ChatGPT and other generative models, disinformation creators needn’t boast a command of foreign languages or even a deep cultural understanding of their audience; they only need to be good at prompting. Such models can also tailor linguistic context to suit a given population or community.

And the possibilities even reach beyond this. A skilled prompter can cook up a general article or set of posts, then very quickly modify this to create a range of variants, each suitably embellished to appeal to a particular audience. Work that once took hours or days can now be achieved in a matter of minutes.

In fact, anyone with sufficient spare time, moderate expertise in using generative AI tools, and a laptop can set up a disinformation center in their own home. According to WIRED, it costs only $400 to build a disinformation toolkit that can churn out fully-fledged disinformation campaigns and spread them across the internet.

So, cue the challenge for open-source research specialists—to develop a method for identifying and removing these more sophisticated disinformation campaigns before they go viral and affect public sentiment. And as the importance of this type of work gains more traction, you can expect to see an influx of OSINT products geared toward facilitating it. Watch this space.
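As a rough illustration of what such identification might involve, here is a minimal sketch of one well-known building block: flagging posts that are near-duplicates of one another, as quickly produced variants of a single base text often are. It compares overlapping word windows ("shingles") using Jaccard similarity. The function names, the three-word window, and the 0.5 threshold are all assumptions chosen for this example, not a method taken from any product or from the reporting above, and real campaigns that paraphrase heavily would need far more than this.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles (overlapping word windows) in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        # Text shorter than one window: treat the whole text as one shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection over size of the union."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_near_duplicates(posts: list, threshold: float = 0.5, k: int = 3) -> list:
    """Return (i, j, score) for every pair of posts scoring at or above threshold.

    The threshold is a hypothetical value picked for illustration only.
    """
    sets = [shingles(p, k) for p in posts]
    flagged = []
    for i, j in combinations(range(len(posts)), 2):
        score = jaccard(sets[i], sets[j])
        if score >= threshold:
            flagged.append((i, j, round(score, 2)))
    return flagged


# Made-up example posts: the first two differ by a single word.
posts = [
    "The new policy will hurt working families across the north of the country",
    "The new policy will hurt working families across the south of the country",
    "Local bakery wins regional award for best sourdough loaf",
]
print(flag_near_duplicates(posts))  # [(0, 1, 0.57)]
```

Comparing every pair is fine for a handful of posts but grows quadratically, so anything at scale would likely swap in an approximate technique such as MinHash. The sketch only surfaces candidates for a human analyst to review; it says nothing about whether the content is true or false.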

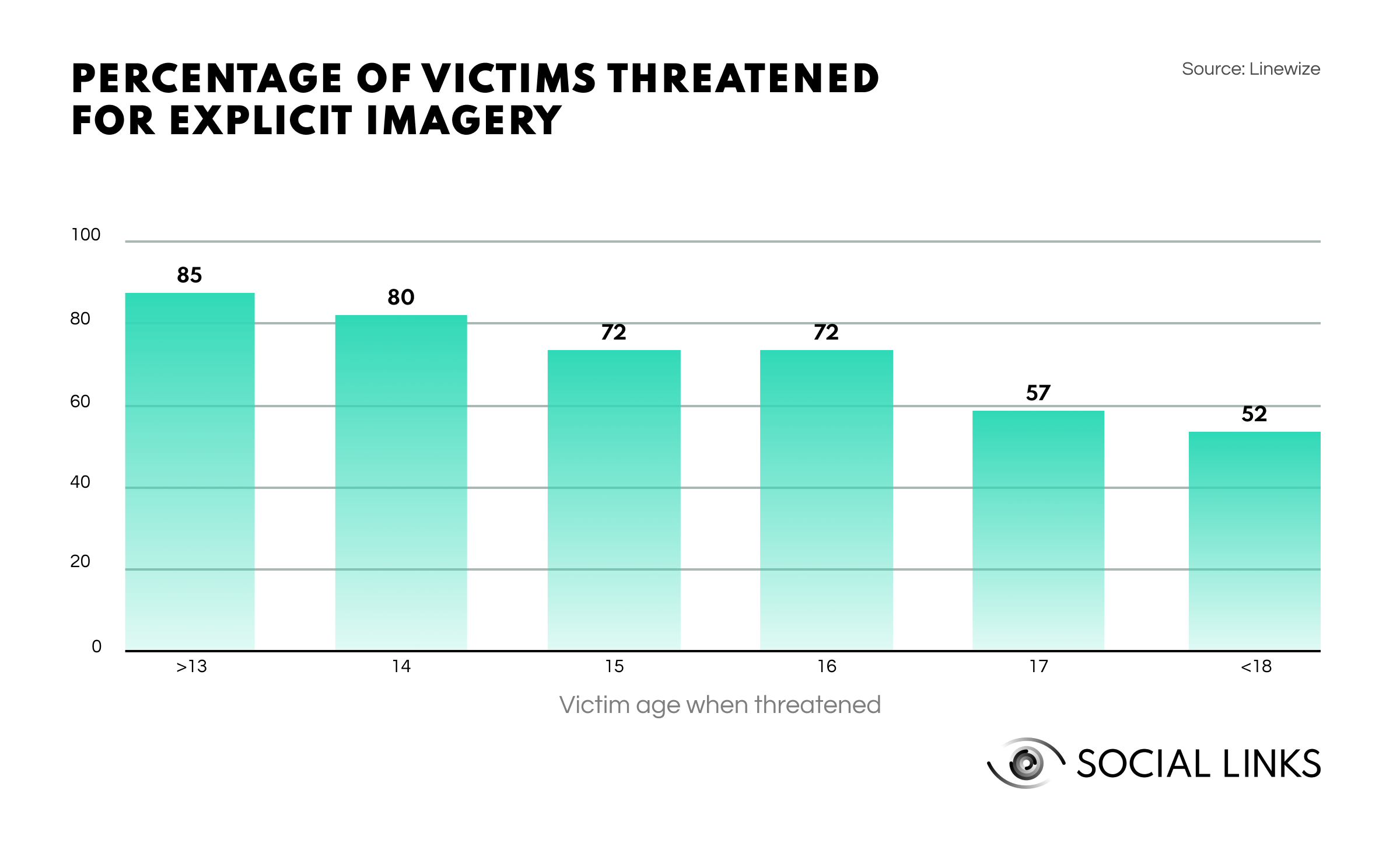

We have a new heavyweight contender in online blackmailing. The number of sextortion scams rose by 178% in 2023 to become one of the greatest threats posed via email and social media. The average payout for such extortion scams is $1260; in many cases, the extortionists don’t even possess any compromising material.

In recent news, a new example of this type of scam has come to light: extortionists were sending emails posing as the adult site YouPorn. The email explains that video footage of the recipient is ready to be uploaded to the site. However, this can be prevented if they pay for a ‘security plan’ ranging from $199 to $1399.

While conducting a one-off fraud ploy is not especially complicated, running a full-on campaign big enough to make the risk potentially worthwhile is a different matter: it requires no small amount of tech expertise, time, and money. Since many would-be fraudsters lack these necessities, we are now seeing the emergence of a business model designed to monetize this situation: Fraud as a Service.

Much like Software as a Service, which is a subscription-based model for using a program, Fraud as a Service, or FaaS, offers subscribers access to sensitive data and tactic guides, allowing them to carry out their own large-scale fraud campaigns. These fraud packages aren’t limited to a single approach but often offer a wide menu of operations subscribers can choose between.

The Global Investigative Journalism Network has just published its Guide to Investigating Digital Threats: Trolling Campaigns. The article looks into some classic trolling techniques, including amplification through social media, hashtag hijacking, emotional manipulation, astroturfing, deepfakes, and more.

A mobile app designed to help users flag fake profiles on social media, thereby accelerating their removal, has recently been launched. This comes partly in response to warnings from MI5 claiming that over the last five years, 10,000 British nationals had been contacted by foreign agents hiding behind alias accounts.

A new article from Security Week outlines the importance of inter-sector cooperation when it comes to threat intelligence. The piece argues that, while threat actors only need to find one gap to exploit an entire organization, cybersecurity units need to be aware of all hazards, meaning partnerships and cooperation are essential to quality threat intelligence.

When her mother disappears, a young woman starts to investigate from her home computer using any resources she can get her hands on. As the plot thickens, our protagonist’s digital investigation starts to raise more questions than it answers…

And that wraps up our digest for the third quarter of 2023. We hope you enjoyed it. Keep your ear to the ground for all things OSINT by subscribing to our blog.